We’ve all been there before. A product design decision is going round in circles. As fatigue and analysis paralysis set in, the team focuses on achieving a compromise that satisfies business or technical requirements rather than solving the user’s problem. The customer insight, gathered and communicated weeks previously, ends up a casualty in the drive to come to a resolution.

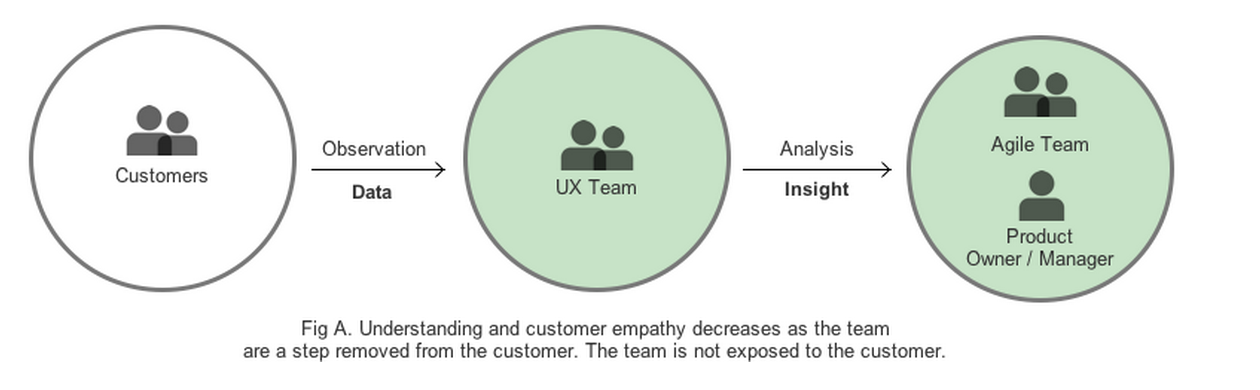

One of the challenges of product design is ensuring customer insight is baked into the design process. Typically it’s a researcher or other UX practitioner who gathers and communicates customer insight. However, this is only one input into the design process—product owners, developers, testers, and business analysts all contribute to the design process by bringing their own perspectives and priorities. Customer insight, business value and technical feasibility must all align.

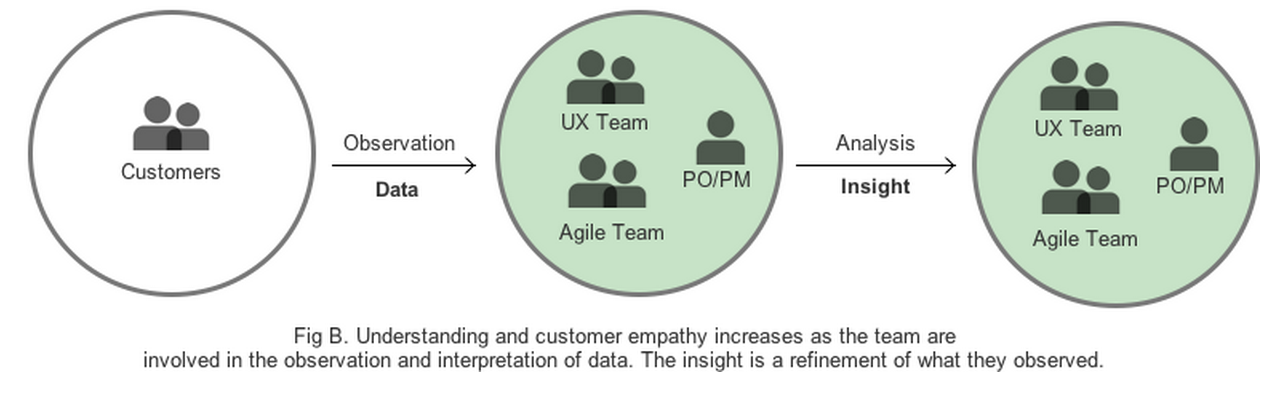

One of the key questions UX teams need to answer is this: how do we effectively imprint customer insights into the teams building the software? One answer, we’ve found, is to have our teams actively participate in the actual research by observing usability testing and providing feedback during the data analysis.

The past: the spoken word

In the past, researchers used to gather and analyze customer insight and communicate that information to product managers, business analysts, and other members of the UX team. We put a lot of emphasis on the research artifacts, and a lot of effort into presenting the research and the insights gained to developers and product managers.

Once that research was documented, it would spend the rest of its existence on a shared drive, only to see the light of day if and when someone thought to ask a researcher, “Have we ever done any research on…” Over time this became a form of UX folklore, with customer insight only accessible to those inclined to seek out the researchers.

Worse still, new designers forgot why a design decision was made, to the detriment of the customer experience. In one instance, a team removed a critical piece of microcopy, not realizing that there was both qualitative and quantitative data indicating its importance. This microcopy was an aid to content discovery; by removing it, the team inadvertently forced customers to adopt a less effective usage pattern.

Although our knowledge management has improved over time, and we employ reports, personas, and the occasional poster, it’s not enough. No matter how well we communicate customer insight, teams empathize with customers more when they see them in action. They remember what they saw, rather than simply relying on a researcher or designer to advocate for the user.

This is what brought us to considering a whole other side of research education, based on a simple question: if we can’t get developers interested in consuming customer insight, can we get them involved instead?

Carrying the fire

Active involvement does not just mean inviting designers or developers along to observe testing; it also means including them in interpreting and analyzing the research.

Before research

Each sprint we devote a full day to research. Before research day, we explain to our agile team leads that they will need to make time to attend. Of course, it’s not easy to get an agile team committed to research time, and we don’t always succeed. In a recent session, our engineers were under pressure fixing bugs at the tail end of a sprint and could not attend.

To decrease the likelihood of research getting de-prioritized during a hectic 3-week sprint, we designate research as a “task” and add it to the backlog. We also try to arrange it so that it’s not happening at the end of a sprint; we know this can be a very busy time for developers.

Some things to consider before research day:

- Plan to balance the observers. It’s important to get a variety of people into the observation room. Our observation room in Dublin can only accommodate about 8 people; a good balance would consist of the product owner, an analyst, a developer, a UX architect, a UI designer, and a researcher, who serves as the group’s facilitator. One of the “good problems” we have at Paddy Power is that there is now too much demand for attendance on test day. To accommodate, we always record our user sessions, and we’re investigating options to stream a feed from the interview room.

- Communicate roles and responsibilities. If designers and developers are new to user research, they may not know what is expected of them as observers. The facilitator should review ground rules, answer any questions, and communicate the goals of this particular research session. When observers understand the research priorities and design direction, they can better focus on the goals of the research. This is particularly important during the early, uncertain stages of design.

- Focus on what’s important. While we encourage our observers to submit potential questions, it is with the understanding that the research team has the final say regarding whether questions are in or out. It’s vital to keep the questions pertinent to the goals of the research, and not muddy the waters with pet questions.

On research day

At Paddy Power, once we began inviting our agile teams to the research sessions we realized we needed to set some ground rules.

- We’re here for at least half the sessions. No drop-ins. Minimum half day. If someone only watches one participant, their contribution to any discussion will be colored by what that one participant said and did, as opposed to the patterns that we recognize when we watch multiple participants.

- We are active observers. In the observation room, we get observers actively taking notes on Post-its and not just sitting passively. These notes will be used to identify themes later, during analysis. To avoid distractions, we ask observers not to use their phones or laptops during observation.

- We wrap up at the end of each session, not the end of the day. We’ve found it’s key to record observations immediately after the user leaves. This avoids the peak-end phenomenon whereby people recall mostly what they saw at the end of the day.

- We include a facilitator in the observation room. A facilitator in the observation room keeps everything running smoothly and ensures that observers are focused on taking notes, rather than chatting or doing other work.

These rules ensure observers think critically about what they are observing. They also help us to begin analysis while conducting research, which accelerates the analysis that happens after research day.

After research day

Before we updated our process from the traditional waterfall methodology, analysis was conducted by a lone researcher. Instead of design improvements being incorporated immediately, they were treated as new backlog items, which would often be de-prioritized in favor of new feature development. Now, designers and developers are part of the analysis, and see the value of updating the product design to include the new information.

We conduct a final analysis with roughly four designers and researchers the day after conducting research, resulting in immediately actionable insight that we can use in the next sprint. When we’ve finished our final analysis, the insights are summarized in email and on the knowledge base. For some teams, this would be where research ended, but we build on that research by including researchers, designers, and developers in the next phase: design.

Our collaborative design sessions are a fast method of synthesizing a prototype out of our research. In a recent ideation workshop for a game app, we involved product managers, designers, analysts, and developers. The team built a customer journey that was both technically feasible and encouraged the customer to continue playing over multiple sessions. Compare this to our old process, where we would meet first with UX and business analysts, then with product management, then with design, and finally with development. The process might take weeks, and by the end we often forgot the research that had initially inspired us. In short, it was a slower process with a poorer outcome for the business and the customer.

In these design sessions we see:

- Higher confidence from non-designers. When discussing the overall design approach, the team now has an informed opinion of the user workflow.

- Informed decisions with the customer in mind. With the research fresh in our minds, we are able to reference the user’s actions rather than making assumptions. The team is also better able to prioritize the most critical failures.

- Faster consensus on design decisions. By working together, we’re simultaneously considering technical feasibility, business value, and the user experience. Our shared understanding allows us to achieve consensus easily, with fewer arguments.

Ultimately, our whole team is invested and on the same page. Information is in the open and everyone has a better understanding of the problem they’re trying to solve.

Everyone carries the fire

Something transformative happens when teams are involved in hands-on research. Less time is spent teaching—essentially explaining and repeating—and more time is spent applying. The team becomes more comfortable applying the insight, and is more willing to seek it out.

In addition, the research is held in the heads of many people in the team, not just UX practitioners. The customer perspective isn’t something that needs to be “represented” anymore and is instead a natural part of any conversation about the product. Over time, a store of knowledge is built up within the team that is no longer dependent on the researcher’s ability to effectively pass on information. Finally, the team is better equipped to apply this knowledge in the design solution and is more engaged in the product they are building.

This is a journey in progress. We want this to be something that all of our teams do all of the time rather than something some of our teams do some of the time. Here are a few steps that any team can take to get started.

- Encourage agile teams to get involved in activities normally reserved for UX practitioners—research, ideation, sketch sessions, and the like.

- Embed UX practitioners in agile teams.

- Encourage teammates to be transparent about their assumptions and their reasoning.